Introduction

This article describes using Gemini to help write code in languages such as Python or JavaScript. It draws on a few weeks of hands-on experience programming with Gemini to write small tools, not large applications.

Disclaimer

The approach described here is purely empirical: it reflects my own experience, not benchmarks.

Issues

I ran into the following issues:

- Gemini seems to forget earlier code settings after a few iterations.

- Gemini seems to omit or disregard earlier instructions, for example an instruction to use certain environment variables.

- Gemini seems to struggle particularly with JavaScript and to do much better with Python. Discussions online point the same way, but I have no benchmark to prove it.

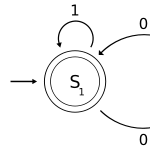

- Gemini is excellent on some problems but falls into a local maximum on others, meaning it gets stuck in a loop.

- Gemini will completely rewrite segments of code even when you only ask it to add a feature.

- Gemini will leave out some parts, whether by mistake or not.

- Gemini tends to send back only sections of the code, which can make merging and comparing versions convoluted.
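Two of these issues, dropped settings and unexpected rewrites, can be checked mechanically before accepting a reply. The following is a minimal sketch using Python's standard `difflib` module. The function names `changed_lines` and `dropped_settings`, the environment variable `TOOL_API_KEY`, and the two sample snippets are all hypothetical and serve only as illustration.

```python
import difflib

def changed_lines(stable: str, proposed: str) -> list[str]:
    """Return a unified diff between the stable code and the proposed code."""
    return list(
        difflib.unified_diff(
            stable.splitlines(),
            proposed.splitlines(),
            fromfile="stable",
            tofile="proposed",
            lineterm="",
        )
    )

def dropped_settings(proposed: str, required: list[str]) -> list[str]:
    """Return the required names that no longer appear in the proposed code."""
    return [name for name in required if name not in proposed]

# Hypothetical example: the stable version reads a key from the environment,
# while the proposed version silently hard-codes it.
STABLE = 'import os\nkey = os.environ["TOOL_API_KEY"]\nprint(len(key))\n'
PROPOSED = 'key = "changeme"\nprint(len(key))\n'

if __name__ == "__main__":
    print("Dropped settings:", dropped_settings(PROPOSED, ["TOOL_API_KEY"]))
    print("\n".join(changed_lines(STABLE, PROPOSED)))
```

The substring check is deliberately crude: it only reports that a name has vanished, not whether it is still used correctly, so it complements a manual review of the diff rather than replacing it.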

Good practices

I found the following practices useful:

- Interact with Gemini as if it had the same level of knowledge as you: not more, not less.

  - Why not more: it can make mistakes, and if you assume it knows more than you do, you won’t necessarily review the code.

  - Why not less: it can omit things or become overly verbose, complicating the answer when you were expecting a certain outcome.

- Establish rules and guidelines:

  - use a certain mode

  - use certain indentation

  - use certain architectural aspects (components)

- Establish a stable version of the code, one that is reliable to go back to. That’s your anchor.

- Combine Gemini with GitHub for saving, updating, and versioning the code.

- When you hit a local maximum, where the AI keeps guessing in a loop, you are better off disengaging completely and working on the problem yourself.
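One way to apply the "rules and guidelines" practice is to keep the rules in one place and prepend them to every request, so they are restated on each iteration instead of relying on the model to remember them. The sketch below only builds the prompt text and does not call any Gemini API. The rule wording, the `RULES` list, and the `build_prompt` function are assumptions made for illustration.

```python
# Hypothetical standing rules; adapt the wording to your own project.
RULES = [
    "Use 4-space indentation.",
    "Read configuration from environment variables; never hard-code it.",
    "Return the complete file, not only the changed section.",
    "Do not rewrite code that the request does not touch.",
]

def build_prompt(request: str, rules: list[str] = RULES) -> str:
    """Prefix a request with the standing rules as a numbered list."""
    numbered = "\n".join(f"{i}. {rule}" for i, rule in enumerate(rules, start=1))
    return f"Follow these rules for every answer:\n{numbered}\n\nRequest: {request}"

if __name__ == "__main__":
    print(build_prompt("Add a --verbose flag to the command-line tool."))
```

Keeping the rules in a single list also means they can be committed alongside the stable version of the code, so the rules and the anchor stay in step.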

Conclusion

This article discussed using Gemini for application development.

I hope it offers some useful insights into AI-assisted development and helps you get better results from it.