Some people always ask what was the result of my thesis. So below I tried to summarize it.

In one sentece: An automatic method for comparing performance the data provided by tracers/profilers.

In technical terms: We used a non-supervised method, called auto-clustering to group similar performance using profiled data in a data structure. Those groups are compared later and the difference in terms of performance metrics is probably the root cause of the performance issue.

The main part of the thesis was presented in a conference [1].

Methodology

We chose to develop a non-supervised method, called auto clustering. The possibility to use an automated approach is more interesting for us, in comparison with non-automated methods, mainly because we aim not to use data to train the model. Therefore, we implemented a version of comparative k-means using the SSE (sum of square errors) variability information, plus a heuristic evaluation. This technique can be used for an arbitrary number of dimensions, since the amount of difference, SSE (sum of squared error), can be calculated in these cases.

Elbow method

One method to quantitatively measure the number of clusters is the elbow method. This method compares the sum of squared errors (SSE), considering several numbers of groups from the classification used.

The elbow method gives the possibility to use the SSE to find the elbow value, which can be defined as a value at which the SSE changes its behaviour abruptly. In our cases, the elbow value is when the SSE stop decreasing substantially.

However, the elbow method does not guarantee a perfect match in cases where the data is well distributed. Instead, the analysis of the SSE can give a smooth curve and the best value for the number of groups is not precisely defined. In cases like this, we developed another clustering based on the mean distance of the data.

Heuristic Evaluation

To compare the SSE values, we needed also to do a heuristic function which compares the different values of the SSE, to compute the Elbow.

Therefore, we use this approach to compare several runs of classifications and extract the one with the smallest squared errors. The heuristic used is to take an optimal group the biggest gap in an array of SSE values. We use a linear comparision described below speed O(n).

_________________________________________________________________________________

Algorithm for biggest gap evaluation: Algorithm to get the biggest gap given a sequence of numbers.

_________________________________________________________________________________

biggest_gap( [sequence]):

Input: Sequence of numbers, which are the SSE values

Output: the location of the biggest gap

gap= sequence[1] – sequence[0]

position = 1

i = 0

while i < length (sequence):

if (sequence[i] – sequence[i++] > gap):

gap = sequence[i] – sequence[j]

position = i

i++

return (position, gap)

Association among the Groups

The clustering of metrics is just one part of the approach since a rule of groups needs to be applied to find the specific cause for the discrepancy between the executions. To solve this problem, and find the cause of the difference, we applied a set association rule after the grouping mechanism. Therefore, using a set exclusion, we can find the metric that is responsible for the elapsed time.

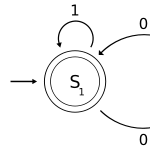

The association rule is illustrated in Figure \ref{fig:association}, which describes a metric X and the elapsed time comparison. The grouping on Metric x divides the data into two groups, and those groups are intrinsically related to the elapsed time group. \\

The association rule can be applied in an arbitrary classification algorithm with several different dimensions. Thus, the association can be defined as a heuristic to find the root cause of problems, using grouping or clustering algorithms.

Then we create a matrix of groups correlation can be constructed to better understand the relations among the groups.

Slides

Conference / citation

[1] Performance Analysis Using Automated Grouping Mechanisms. Isnaldo Francisco de Melo Junior, Abderrahmane B. and Prof Michel D. to be presented at the 2018 IEEE International Conference on Software Quality, Reliability & Security in Lisbon, July 2018