Intro

Shortly before finishing my master's, I got some help from Prof. Orlando Silva, from Mackenzie, during his Neural Network classes.

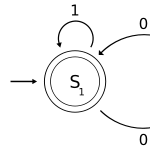

I started learning a bit about Recurrent Neural Networks, aka RNNs!

Not dead anymore

I was quite impressed by how powerful they are, and by the fact that the field stood still for such a long time until recently.

So I tried to learn it, from the basics to the more advanced topics. The math was difficult for me in the beginning, but after several books you kind of get used to it.

Reservoir Computing

While doing this small research I came across Prof. Murilo B, at Aberdeen University, and his work on Reservoir Computing. He knew so many things, and so many properties of those things.

I was impressed by how many things we can use it for, from speech recognition to chaos theory!

Some properties

Since one can think about recurrent networks in terms of their properties as dynamical systems, it is natural to ask about their stability, controllability and observability:

Stability

Stability concerns the boundedness over time of the network outputs, and the response of the network outputs to small changes (e.g., to the network inputs or weights).
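To make this a bit more concrete, here is a minimal sketch in plain Python. It is not taken from any library: the two-unit tanh network, the weights `W` and `W_in`, and the sine input are all made-up values for illustration. The weights are deliberately small so that the update is a contraction, which is an assumption of this toy and not a general property of RNNs. The sketch checks that the states stay bounded and that a small change in the inputs only causes a small change in the states.

```python
import math

def step(state, u, W, W_in):
    """One update of a tiny tanh RNN: x_next = tanh(W x + W_in * u)."""
    return [
        math.tanh(sum(w * x for w, x in zip(row, state)) + w_in * u)
        for row, w_in in zip(W, W_in)
    ]

def run(inputs, W, W_in, x0):
    """Feed the whole input sequence through the network, keeping every state."""
    state = list(x0)
    trajectory = []
    for u in inputs:
        state = step(state, u, W, W_in)
        trajectory.append(state)
    return trajectory

# Hypothetical weights: each row of W has absolute sum 0.5, so the map contracts.
W = [[0.3, -0.2], [0.1, 0.4]]
W_in = [0.5, -0.5]

inputs = [math.sin(0.3 * t) for t in range(50)]
nudged_inputs = [u + 1e-3 for u in inputs]  # small change to every input

base = run(inputs, W, W_in, [0.0, 0.0])
nudged = run(nudged_inputs, W, W_in, [0.0, 0.0])

# Boundedness: tanh keeps every state component inside [-1, 1].
max_abs = max(abs(x) for state in base for x in state)

# Sensitivity: largest gap between the two runs over all time steps.
max_gap = max(
    abs(a - b)
    for s_base, s_nudged in zip(base, nudged)
    for a, b in zip(s_base, s_nudged)
)

print("largest state magnitude:", max_abs)
print("largest gap after nudging the inputs:", max_gap)
```

With larger recurrent weights the gap between the two runs can grow instead of staying small, which is where the link to chaos shows up.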

Controllability

Controllability is concerned with whether it is possible to control the dynamic behavior. A recurrent neural network is said to be controllable if any initial state can be steered to any desired state within a finite number of time steps.
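The definition is easier to see on a toy. The sketch below swaps the nonlinear network for a two-state linear system `x_next = A x + B u`, which is a strong simplification and only an assumption for illustration. The matrices and the helper `steer_in_two_steps` are hypothetical. It solves for the two inputs that drive the state to a target in two steps, and reports failure when the matrix `[AB, B]` is singular, i.e. when this toy system is not controllable.

```python
def mat_vec(M, v):
    """Multiply a 2x2 matrix (list of rows) by a length-2 vector."""
    return [sum(m * x for m, x in zip(row, v)) for row in M]

def simulate(A, B, x0, controls):
    """Run x_next = A x + B u for each control input u and return the final state."""
    x = list(x0)
    for u in controls:
        Ax = mat_vec(A, x)
        x = [ax + b * u for ax, b in zip(Ax, B)]
    return x

def steer_in_two_steps(A, B, x0, target):
    """Find (u0, u1) so that the state equals target after two steps.

    After two steps: x2 = A^2 x0 + (A B) u0 + B u1.
    Returns None when [AB, B] is singular (toy system not controllable).
    """
    AB = mat_vec(A, B)
    drift = mat_vec(A, mat_vec(A, x0))          # A^2 x0, what happens with no input
    rhs = [t - d for t, d in zip(target, drift)]
    det = AB[0] * B[1] - AB[1] * B[0]
    if abs(det) < 1e-12:
        return None
    # Cramer's rule on the 2x2 system [AB, B] [u0, u1]^T = rhs
    u0 = (rhs[0] * B[1] - rhs[1] * B[0]) / det
    u1 = (AB[0] * rhs[1] - AB[1] * rhs[0]) / det
    return u0, u1

# Hypothetical system where the input reaches both states (through the coupling in A).
A = [[0.5, 1.0], [0.0, 0.5]]
B = [0.0, 1.0]
controls = steer_in_two_steps(A, B, [0.0, 0.0], [1.0, -1.0])
reached = simulate(A, B, [0.0, 0.0], controls)
print("inputs:", controls, "state reached:", reached)

# Hypothetical system where the input never touches the second state.
A_stuck = [[0.5, 0.0], [0.0, 0.5]]
B_stuck = [1.0, 0.0]
print("uncoupled system:", steer_in_two_steps(A_stuck, B_stuck, [0.0, 0.0], [1.0, -1.0]))
```

In the second system the input only ever moves the first state, so no choice of inputs can reach a target with a non-zero second component.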

Observability

Observability is concerned with whether it is possible to observe the results of the control applied. A recurrent network is said to be observable if the state of the network can be determined from a finite set of input/output measurements. A rigorous treatment of these issues is way beyond the scope of this post!
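Far from a rigorous treatment, here is a toy sketch of the observability idea. It again assumes a two-state linear system `x_next = A x` with a scalar output `y = C x` and no input, which is a simplification of a real recurrent network. The matrices and the helper `recover_initial_state` are hypothetical. The hidden initial state is reconstructed from two output measurements, and the reconstruction fails when the matrix stacking `C` and `CA` is singular.

```python
def output(C, x):
    """Scalar measurement y = C x."""
    return sum(c * xi for c, xi in zip(C, x))

def advance(A, x):
    """One input-free step x_next = A x."""
    return [sum(a * xi for a, xi in zip(row, x)) for row in A]

def recover_initial_state(A, C, y0, y1):
    """Reconstruct x0 from y0 = C x0 and y1 = C A x0.

    Returns None when the stacked matrix [C; CA] is singular (toy system not observable).
    """
    CA = [sum(C[k] * A[k][j] for k in range(2)) for j in range(2)]
    det = C[0] * CA[1] - C[1] * CA[0]
    if abs(det) < 1e-12:
        return None
    # Cramer's rule on the 2x2 system [C; CA] x0 = [y0, y1]
    first = (y0 * CA[1] - C[1] * y1) / det
    second = (C[0] * y1 - CA[0] * y0) / det
    return [first, second]

A = [[0.5, 1.0], [0.0, 0.5]]   # hypothetical dynamics
hidden_x0 = [0.2, -0.7]        # the state we pretend not to know

# Measuring the first state: the second one leaks into it through A.
C_good = [1.0, 0.0]
y0 = output(C_good, hidden_x0)
y1 = output(C_good, advance(A, hidden_x0))
estimate = recover_initial_state(A, C_good, y0, y1)
print("recovered state:", estimate)

# Measuring only the second state: the first one never shows up in the output.
C_blind = [0.0, 1.0]
z0 = output(C_blind, hidden_x0)
z1 = output(C_blind, advance(A, hidden_x0))
print("blind measurement:", recover_initial_state(A, C_blind, z0, z1))
```

In the second case the output never depends on the first state component, so no number of measurements would reveal it.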

Git

I forked the Torch RNN library here [2]; you should do the same!

Summary

I wrote a small summary of my research, and if you are interested in more you can see it here [1]. It is very short and condensed, i.e. 15 or 16 pages.

[1] https://docs.google.com/document/d/1fE_6TLHhz010YeYFa1g0SHfkDYWNzL8qFD5RoroY_rM/edit?usp=sharing